A couple of years ago I was approached by an American publisher about the possibility of writing a general reference/textbook that covered Web 2.0 and Social Media. It followed on from the success of a report I wrote for JISC in 2007, which was written for both technical and non-technical readers, and the publishers wanted something similar, but more of it.

Well, yesterday a friend rang to ask if I knew that the ‘buy’ link had been activated on Amazon, so I guess I can say that my book, Web 2.0 and Beyond (published by Chapman & Hall/CRC, a computer science imprint of Taylor & Francis), is well and truly published.

The remit was challenging – CRC were developing a new series, aimed at reinventing the textbook format. Their point was that, increasingly, it is students from business studies, economics, law, media studies, psychology etc. who want to understand what CompSci is up to but who don’t necessarily have the deep technical knowledge to really understand how the technology came to be or what the implications of it are. However, as CRC is primarily a computer science imprint they also didn’t want to compromise on the requirements of their primary audience.

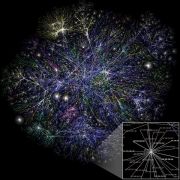

I was particularly interested in this idea because studying social media is increasingly becoming an interdisciplinary melting pot. Also, having taught computer science, I was keen for students to have a well-rounded sense of the discipline – that they should have a sense of context rather than just learn how to write code. I could also see parallels with Web Science, which at the time was only just starting to emerge: the study of the Web as the world’s largest and most complex engineered environment. I thought that if ever there was going to be a moment when it was possible to bring all this together in one book, it would be now.

The tricky thing, of course, was getting it all to come together. With the help of some extremely skilful editing, I think what we’ve done is to obey three golden rules: only tell readers what they need to know at that point in time; use narrative techniques that engage readers and allow them to read through the filter of their own discipline; and keep highly specialised information (hard-core technical information, overviews of research etc.) in separate sections and chapters.

The framework for all of this is the ‘iceberg model’, which tackles Web 2.0 using a layered approach. The premise of the book is that if you understand the iceberg model you will be better equipped to understand how the Web is likely to evolve in the future. There are, of course, a few pointers as to what that might look like.

In the spirit of Web 2.0 there are also various information sources associated with the book. There’s a YouTube channel where I post information about relevant videos, and you can find out about these if you subscribe to the book’s Twitter feed (@web2andbeyond) where I also post other snippets of relevant information that help to keep the book fresh. More detailed information is on the book’s Facebook page (www.facebook.com/web2andbeyond), which also includes notes and excerpts to give a taste of the narrative style of writing I mentioned earlier.

It has been a while in the making and part of me still can’t believe that it’s actually here, but it is, so now all I need is for people to buy it. Hint hint.

This site is now an archive

March 18, 2019

“The dogs bark but the caravan moves on”

André Gide (quoting an old proverb)

This site is now officially in archive mode.

I’ve not posted anything for over a year and have been working on non-technology writing projects in recent months.

It’s possible I will return at some point, but in the meantime, it represents a good summary of the technological mischief I was getting up to in the years 2007-2018. During that time I was a freelance technology writer and a technology futurist for some major national clients, including JISC. In 2012, my book, ‘Web 2.0 and Beyond’, was published.

If you need to contact me, the details are in the ‘About the author’ page.
